With new hardware, software and artificial intelligence technology, Google expects significant improvements in the camera of its Pixel 7 Pro smartphone. And CNET has an exclusive deep dive into exactly what the company has done.

Partly because of this imaging technology, Google is pitting its $899 Android flagship phone directly against the $1,099 Apple iPhone 14 Pro Max, although the smaller $999 iPhone 14 Pro has the same camera hardware.

Google’s Pixel phones haven’t sold well compared to Samsung and Apple models. But they get high marks in photography year after year. And if there is one thing that will attract customers, it will be camera technology.

Last year’s Pixel 6 Pro was equipped with a “camera bar” housing three rear cameras: the 50MP main camera, a 0.7x ultrawide and a 4x telephoto. Pixel 7 models keep the same 50MP camera but house it in a redesigned camera bar. On the Pixel 7 Pro, the ultrawide camera has the same sensor as last year but gets a macro mode, autofocus and a wider 0.5x zoom level. The 7 Pro’s telephoto zoom extends to 5x, and a new 48MP telephoto sensor enables a 10x zoom mode without using any digital zoom tricks. Both phones get a new front-facing selfie camera.

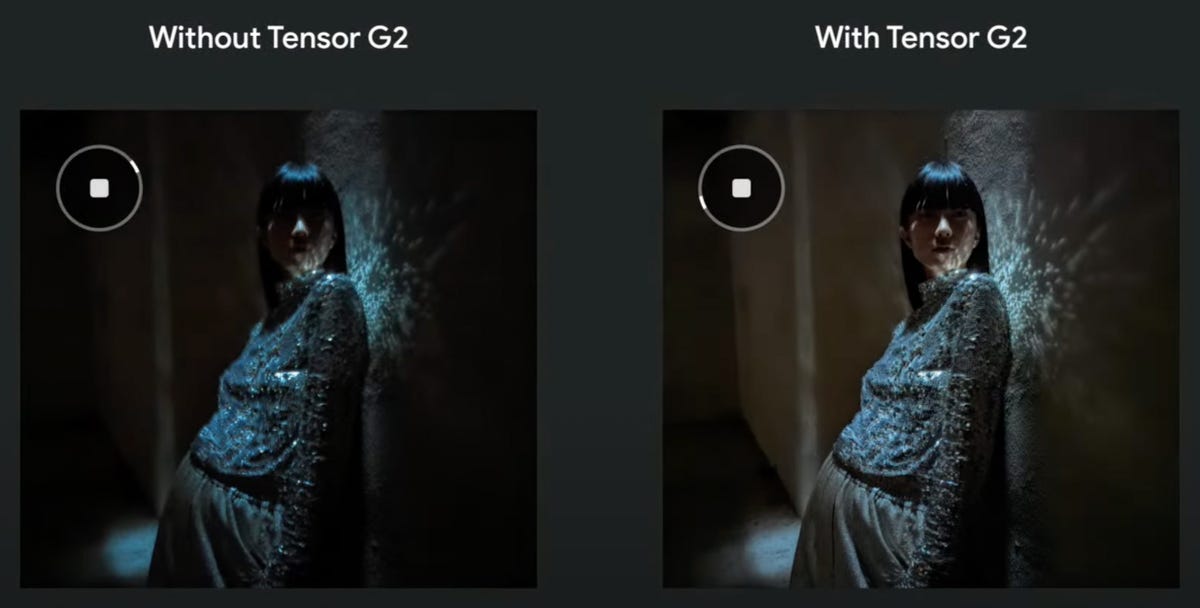

But the Pixel 7 Pro’s improved hardware foundation is only part of the story. New computational imaging technology is enabled by new AI algorithms and Google’s new Tensor G2 processor. It speeds up Night Sight, reduces blur in faces, improves video stability, and combines data from multiple cameras to improve image quality at intermediate zoom levels such as 3x. The result is closer to how a traditional camera behaves.

“What we really tried to do was give you a 12mm to 240mm camera,” said Alexander Schiffhauer, Pixel Camera Hardware Leader, translating the Pixel 7 Pro’s 0.5x to 10x zoom range into traditional 35mm camera terminology. “This is like a Holy Grail travel lens,” he added, referring to a multi-purpose setup that longtime photo enthusiasts have prized for its portability and flexibility.
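The conversion Schiffhauer describes is simple arithmetic, and a short sketch makes it concrete. The 24mm base value is an assumption inferred from the two endpoints quoted above (0.5x as 12mm and 10x as 240mm), not a figure Google states here, and the function name is purely illustrative.

```python
# Assumption: 1x corresponds to roughly 24mm in 35mm-equivalent terms,
# inferred from the quoted endpoints (0.5x -> 12mm, 10x -> 240mm).
BASE_FOCAL_MM = 24.0


def equivalent_focal_length(zoom: float) -> float:
    """Return the approximate 35mm-equivalent focal length for a zoom level."""
    return BASE_FOCAL_MM * zoom


# The fixed zoom levels the article lists for the Pixel 7 Pro.
ZOOM_LEVELS = [0.5, 1, 2, 5, 10]

for zoom in ZOOM_LEVELS:
    print(f"{zoom}x is roughly {equivalent_focal_length(zoom):.0f}mm")
```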

Here’s a deeper look at what Google intends to do.

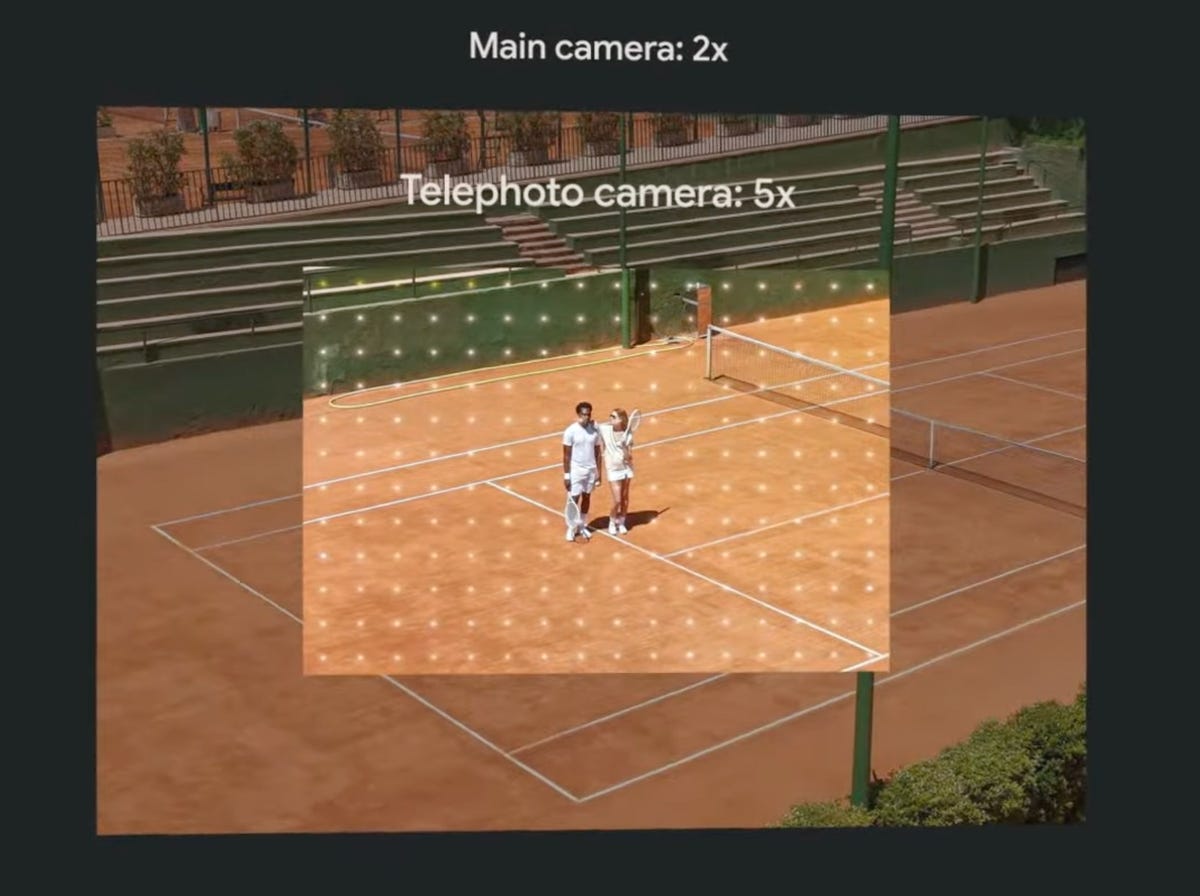

2x and 10x virtual cameras on the Pixel 7 Pro

High-end smartphones now come with at least three rear cameras so you can take a wider range of shots. Ultrawide cameras are good for photographing people crowded into a room and interesting buildings. Telephoto cameras are better for portraits and distant subjects.

But a large gap between zoom levels can be a problem. The 50MP main and 48MP telephoto cameras on the Pixel 7 Pro provide something of a fix.

These cameras usually use a technique called pixel binning to combine each 2×2 grid of pixels into one larger pixel. This produces 12MP shots with better color and a wider dynamic range between dark and light tones.

But by skipping pixel binning and using only the central 12 megapixels, the 1x main camera can take a 2x shot, and the 5x telephoto can take a 10x shot. Smaller pixels mean the image quality isn’t as high, but it’s still useful, and Google applies its Super Res Zoom technology to improve color and sharpness as well. (The 2x mode works on Google’s Pixel 7, too.)
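To make the two modes concrete, here is a toy sketch on a tiny grayscale grid. It is not how the phone's sensor pipeline works: real sensors sit behind color filter arrays and bin in hardware, and averaging is just one plausible way to combine pixels. The function names are illustrative, and the grid is assumed to have even dimensions.

```python
def bin_2x2(image):
    """Average each 2x2 block into one output pixel (halves width and height)."""
    height, width = len(image), len(image[0])
    return [
        [
            (image[y][x] + image[y][x + 1]
             + image[y + 1][x] + image[y + 1][x + 1]) / 4
            for x in range(0, width, 2)
        ]
        for y in range(0, height, 2)
    ]


def center_crop(image):
    """Keep the central half of the rows and columns: a 2x zoom, no binning."""
    height, width = len(image), len(image[0])
    top, left = height // 4, width // 4
    return [row[left:left + width // 2] for row in image[top:top + height // 2]]


# An 8x8 stand-in for the full-resolution sensor readout.
sensor = [[y * 8 + x for x in range(8)] for y in range(8)]

binned = bin_2x2(sensor)       # full field of view, quarter of the pixels
cropped = center_crop(sensor)  # half the field of view, native pixels

print(len(binned), len(binned[0]))    # both outputs are 4x4
print(len(cropped), len(cropped[0]))
```

Both outputs have a quarter of the sensor's pixel count, which is why a roughly 50MP sensor yields roughly 12MP either way. The binned image covers the whole scene with larger effective pixels, while the crop covers only the center at native pixel size.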

Apple has taken exactly the same approach with its iPhone 14 Pro phones, but only with the 1x main camera.

The Pixel 7 Pro’s 1x camera can take 2x photos using only its central pixels, and the 5x camera can take 10x photos the same way.

Google; photo by Stephen Shankland/CNET

Apple, however, lets you shoot full 48MP photos with the iPhone 14 Pro’s 1x camera. At 1x, Google always uses pixel binning and therefore captures only 12 megapixels.


Zoom fusion for more image flexibility

When shooting between 2.5x and 5x zoom, the Pixel 7 Pro blends image data from the main wide-angle and telephoto cameras to improve image quality. Schiffhauer said this produces better photos than simply upscaling the main camera’s image digitally.

But it is difficult. The phone has to reconcile the two cameras’ different perspectives, in which foreground objects block background elements differently. You can see the effect for yourself by covering one eye and then the other and watching how the scene shifts. The two cameras also focus differently because of their different focal lengths.

To avoid discontinuities, Google uses a form of artificial intelligence called machine learning, along with other processing techniques, to figure out which parts of each image should be included or rejected.

Zoom fusion occurs after other processing methods. This includes HDR+, which combines multiple frames into a single image for better dynamic range, and an AI algorithm that monitors hand shake to take pictures when the camera is more stable.
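A rough geometric sketch shows why the 5x camera can only help with the center of the frame at these zoom levels. It assumes idealized cameras that share one optical axis, so it ignores the parallax, focus differences and machine learning alignment described above. The function names and the 4000×3000 frame size are hypothetical.

```python
TELE_ZOOM = 5.0  # native zoom of the telephoto camera, per the article


def telephoto_coverage(zoom: float) -> float:
    """Fraction of the output frame's width and height the 5x camera can see."""
    if not 2.5 <= zoom <= TELE_ZOOM:
        raise ValueError("the article describes fusion only between 2.5x and 5x")
    return zoom / TELE_ZOOM


def central_box(width: int, height: int, zoom: float):
    """Return (left, top, right, bottom) of the region the 5x camera covers."""
    fraction = telephoto_coverage(zoom)
    box_width, box_height = round(width * fraction), round(height * fraction)
    left, top = (width - box_width) // 2, (height - box_height) // 2
    return left, top, left + box_width, top + box_height


# Hypothetical 12MP output frame of 4000x3000 pixels.
for zoom in (2.5, 4.0, 5.0):
    print(zoom, central_box(4000, 3000, zoom))
```

At 2.5x the telephoto data covers only the central half of the frame in each dimension, and the covered region grows until it fills the whole frame at 5x.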

A technology called zoom fusion improves the quality of images captured between 2.5x and 5x zoom by adding pixels from the 5x camera to the central part of the image. AI helps align the two perspectives and reconcile differences.

Google; photo by Stephen Shankland/CNET

Unfortunately for those who like to shoot raw images, a format that offers higher quality and more editing flexibility, zoom fusion isn’t an option. You’ll get full 12MP raw photos only at the Pixel 7 Pro’s fixed zoom levels of 0.5x, 1x, 2x, 5x and 10x.

The Pixel 7 Pro’s new unblur technology

Google introduced technology in 2021 that pairs data from the main and ultrawide cameras to counteract the motion blur in faces that can spoil photos. This face unblur technology now works three times more often, said Isaac Reynolds, Pixel Camera Program Leader.

Specifically, it will work more often in normal light, activate more when it’s dim, and work even on faces that aren’t in focus.

To fix photos after the fact, Google Photos is getting a new tool to unblur images. It even works on digitized film photos from the bygone era of analog photography.

Eye detection and other AF upgrades

The Pixel 7 Pro has many autofocus improvements, starting with the addition of autofocus hardware to the ultrawide camera. For all cameras, Google now uses an AI algorithm to process focus data from the image sensor.

The camera will also be able to identify eyes, not just faces, for autofocus that works more like that found in high-end cameras from companies like Sony, Nikon and Canon.

The new AI technology can also focus better when people are moving in a scene. “If someone turns their head away from the camera or walks away, we can keep the focus on their head,” Reynolds said.

Pixel 7 phones also perform better when faces are hard to recognize, for example when people wear big hats or large, dark sunglasses.

And the new AI-based AF technology makes the switch to telephoto much faster. The Pixel 6 Pro often pauses when its telephoto camera is activated.


Zoom level improvements

From 5x to 10x, the Pixel 7 Pro uses the central pixels of the 5x camera to capture a 12MP image.

With digital zoom methods, the camera can reach up to 30x zoom, higher than the 20x on the Pixel 6 Pro. Google’s Super Res Zoom method takes advantage of hand shake to gather more detailed data about a subject and magnify it better.

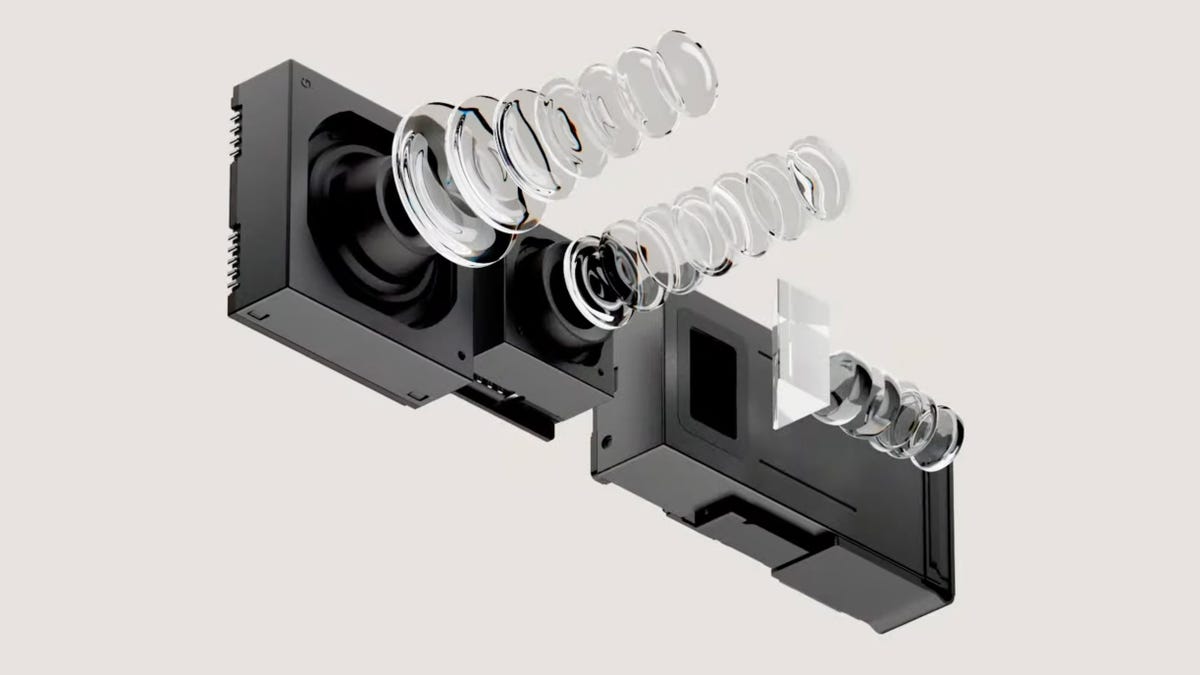

The Pixel 7 Pro has three rear cameras: a 1x main camera with a 50MP sensor, a 0.5x ultrawide camera that can now also take macro photos, and a 5x periscope telephoto camera that reflects light inside the phone body to accommodate the longer optics required.

Google; photo by Stephen Shankland/CNET

Google’s digital zoom also uses artificial intelligence techniques to enlarge images. This year, Google trained its AI to better predict new pixels. The phone also calculates a scene feature called an anisotropic kernel to better predict subtle changes from one pixel to its neighbors, which helps it fill in new data when zooming in.

“Obviously, the quality of 30x will not be quite the same as it was at 10x,” Schiffhauer said. “But you still get these really nice pictures that you can share.”

Faster night photos

Night Sight, the groundbreaking and now widely copied technology for taking better photos when it’s dim or dark, is twice as fast. That’s because Google uses frames it collects before you hit the shutter-release button. (This is possible because the camera is constantly collecting frames and caching them in memory, keeping them only if you actually take a photo.)

“Users wait half the time to take a Night Sight photo,” Reynolds said. “They get much more meaningful results, and they don’t pay any penalty for the noise.”
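The caching idea described above can be sketched with a fixed-size buffer that always holds the most recent viewfinder frames. This is an illustration of the general technique, not Google's implementation: the class, its method names and the buffer capacity are hypothetical, and integers stand in for image frames.

```python
from collections import deque


class PreShutterBuffer:
    """Keep only the most recent viewfinder frames in memory."""

    def __init__(self, capacity: int):
        # A deque with maxlen silently drops the oldest frame when full.
        self._frames = deque(maxlen=capacity)

    def on_viewfinder_frame(self, frame):
        """Called for every frame the camera produces while the app is open."""
        self._frames.append(frame)

    def on_shutter_press(self, frames_after):
        """Combine cached pre-shutter frames with frames captured afterward."""
        return list(self._frames) + list(frames_after)


buffer = PreShutterBuffer(capacity=3)
for frame_id in range(5):          # frames 0..4 arrive before the shutter press
    buffer.on_viewfinder_frame(frame_id)

burst = buffer.on_shutter_press(frames_after=[5, 6])
print(burst)  # the three newest cached frames, then the two new ones
```

Because part of the burst already exists when the shutter is pressed, fewer frames need to be captured afterward, which is consistent with the shorter wait the article describes.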

Google’s Tensor G2 processor doubles Night Sight photo speeds on the Pixel 7 and Pixel 7 Pro, capturing some frames for an image earlier and reducing noise through more efficient AI processing.

Google; photo by Stephen Shankland/CNET

For the Pixel line’s astrophotography mode, an extreme version of Night Sight, new AI noise-removal technology now preserves stars better.

Better stability and other video improvements

Google has also improved video on the Pixel 7 cameras, a weak point compared to iPhones in the eyes of many reviewers. For starters, all Pixel 7 Pro cameras now capture 4K video at up to 60fps, but there’s a lot more:

- Cameras can shoot in 10-bit HDR (high dynamic range) mode to better handle high-contrast scenes, such as those with a bright sky and dark shadows.

- Google is now on the eighth generation of its video stabilization technology. It especially helps when tracking moving subjects at heavy zoom.

- Speech enhancement technology captures people’s voices better when shooting with rear cameras.

- More serious videographers can lock in white balance, exposure, and focus – previously only possible with photos.

- Cinematic blur lets you artificially blur backgrounds in video, a feature previously only available for photos.

- Copying the iPhone’s approach, time-lapse videos are now always 15 to 30 seconds long. Previously, you had to calculate the best settings yourself.

- And by using a technology called blur injection, the Pixel 7 Pro can smooth out the jittery video you sometimes get when it’s bright and shutter speeds are really fast.
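For the time-lapse item in the list above, the calculation that users previously had to do by hand can be sketched as follows. The 30fps playback rate and the 20-second default target are assumptions chosen for illustration, and the function is hypothetical rather than anything the camera app exposes.

```python
PLAYBACK_FPS = 30  # assumed playback frame rate, not stated in the article


def timelapse_settings(recording_seconds: float, target_seconds: float = 20.0):
    """Return (speed-up factor, seconds between captured frames)."""
    speedup = recording_seconds / target_seconds
    capture_interval = speedup / PLAYBACK_FPS
    return speedup, capture_interval


# A 10-minute recording compressed into a 20-second clip.
speedup, interval = timelapse_settings(10 * 60)
print(f"{speedup:.0f}x speed-up, one frame every {interval:.1f} s")
```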

Together, the improvements show that Google is fighting hard to maintain its lead in smartphone camera technology. “We are pushing hardware, software, and machine learning as far as possible,” Schiffhauer said.

Correction, 7:52 am Oct 7: This story incorrectly described the 50MP main camera of the Pixel 7 and 7 Pro phones. The camera is the same as that used on the Pixel 6 models.
